How to prevent domain verification bypasses of your server certificates using CAA and account URI binding and how to monitor problems?

In 2023, there was an attack on the Russian chat platform jabber.ru. The attack lasted about half a year, from April to October, and targeted three servers of the jabber.ru network hosted at the providers Hetzner and Linode in Germany. A later analysis showed that the attackers were able to have server certificates issued for these hosts and used them to intercept the communication. The attack was presumably carried out by state actors. How were the attackers able to have certificates issued, and how could this have been prevented or at least detected early?

Tl;dr: There is a list of recommended actions at the end of this article.

Many technical details about the jabber.ru attack were published by Valdikss in a blog post. According to this post, the attack was detected when an outdated certificate was presented to users who connected to the Jabber service, although this certificate was not installed on the Jabber server itself. The circumstances suggest that the attackers were able to circumvent the domain validation and obtain a valid and trusted certificate from Let’s Encrypt.

Domain validation

Domain validation is the widely used standard approach to verify that an administrator has control over a domain or hostname for which the admin wants to obtain a server certificate from a certificate authority (CA). Many years ago, when manually acquiring certificates was common, the admin proved control over a domain by receiving an e-mail at a certain address, like hostmaster@example.org, or by being able to write something to the DNS zone. The idea has since been automated in the form of the Automatic Certificate Management Environment (ACME) protocol, which is used between certificate management clients and certificate authorities. ACME contains a challenge-response process to prove administrative control over a domain. This challenge-response works via HTTP or DNS.
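To make the challenge-response step more concrete, here is a minimal sketch of how an ACME client derives its response according to RFC 8555: the key authorization is the challenge token joined with the SHA-256 thumbprint (RFC 7638) of the account's public key. The token and the key coordinates below are made-up placeholders, and the function names are our own, not part of any ACME client.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Base64url encoding without padding, as used by ACME."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def jwk_thumbprint(jwk: dict) -> str:
    """SHA-256 JWK thumbprint (RFC 7638) of an EC or RSA public key."""
    required = {"EC": ("crv", "kty", "x", "y"), "RSA": ("e", "kty", "n")}[jwk["kty"]]
    # Only the required members, sorted by name, without whitespace.
    canonical = json.dumps({k: jwk[k] for k in required},
                           sort_keys=True, separators=(",", ":"))
    return b64url(hashlib.sha256(canonical.encode("utf-8")).digest())


def key_authorization(token: str, jwk: dict) -> str:
    """Response body for http-01, served under /.well-known/acme-challenge/<token>."""
    return f"{token}.{jwk_thumbprint(jwk)}"


def dns01_txt_value(token: str, jwk: dict) -> str:
    """TXT record value for dns-01, published at _acme-challenge.<domain>."""
    digest = hashlib.sha256(key_authorization(token, jwk).encode("ascii")).digest()
    return b64url(digest)


# Placeholder values for illustration only (not a real key or token).
example_jwk = {"kty": "EC", "crv": "P-256", "x": "placeholder-x", "y": "placeholder-y"}
example_token = "placeholder-token"
print(key_authorization(example_token, example_jwk))
print(dns01_txt_value(example_token, example_jwk))
```

The sketch shows why network position matters: whoever can answer the HTTP request or the DNS query for the domain, and holds any ACME account key, can produce a valid response.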

If an attacker is able to take over DNS or the server IP address, then domain validation can be circumvented without compromising the server itself. Once an attacker is in a machine-in-the-middle position (MITM) it is possible to obtain a server certificate which is trusted by other clients, for example Jabber clients with regard to the jabber.ru attack. Valdikss’ blog post references certificates which the attackers were able to get for the hosts/domain jabber.ru and xmpp.ru from the certificate authority Let’s Encrypt.

Many organisations use Let’s Encrypt and the ACME protocol to get server certificates for their infrastructure. A MITM position in the network and the redirection of traffic is enough to get a certificate that can be used for further MITM attacks, now complemented with a trusted certificate. This is not a problem of Let's Encrypt. Even if you get certificates from any other CA based on domain validation, someone with control over a server's IP address could have a certificate issued for themselves.

How can this be prevented? Stronger validation concepts like Extended Validation do not fit a fully automated, cost-free process with frequent certificate renewals, and in the end a Jabber client does not care how a server certificate was created, as long as it is trusted. Extended validation therefore ultimately failed as a countermeasure: even if you bought an Extended Validation certificate, the Jabber client would still trust a normal domain-validated server certificate.

CAA and account URI

Let’s have a look at the second question first, while assuming that certificate authorities handle CAA records according to the CA/Browser Forum baseline requirements. In the jabber.ru attack, the attacker also used Let’s Encrypt certificates. So just restricting the CA to Let’s Encrypt does not help.

Now, RFC 8657 enters the stage. It defines CAA record extensions with which the Certification Authority Authorization is bound not only to a specific CA, but also to further characteristics. One of these characteristics is the account URI, a property that identifies a specific Let’s Encrypt account in the form of a URI, hence the name.

According to the ACME protocol (the protocol to get a certificate) as defined in RFC 8555, an ACME client sends a JSON Web Signature (JWS) along with the request that initially creates an account. This JWS contains a public key, which the ACME server stores to verify future requests. The ACME client stores the key and the account URI. For example, if one uses the ACME client dehydrated, then the account URI is stored in /var/lib/dehydrated/accounts/<long-id>/account_id.json. For certbot, there is a command and in general, the account URI can be derived from the ACME account key.

RFC 8657 allows adding an account URI to the CAA issue record, and Let’s Encrypt has supported this feature for some time now. The above CAA example could be extended like this:

pentagrid.ch. IN CAA 128 issue "letsencrypt.org;accounturi=https://acme-v02.api.letsencrypt.org/acme/acct/1234567890"

If then someone tries to obtain a certificate for a host that is protected with an accounturi record, Let's Encrypt will return an error message, for example "CAA record for caa-test.pentagrid.ch prevents issuance".
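The decision a CA has to make for such a record can be sketched as follows. This is a simplified illustration of the RFC 8657 logic, not Let's Encrypt's implementation; the function names are our own, and the sketch ignores the DNS tree climbing, issuewild handling and the validationmethods parameter.

```python
def parse_caa_issue_value(value: str):
    """Split a CAA issue value into (issuer domain, {parameter: [values]})."""
    parts = [p.strip() for p in value.split(";")]
    issuer = parts[0]
    params = {}
    for part in parts[1:]:
        if not part:
            continue
        name, _, val = part.partition("=")
        params.setdefault(name.strip().lower(), []).append(val.strip())
    return issuer, params


def issuance_permitted(issue_values, ca_domain: str, account_uri: str) -> bool:
    """Return True if one of the issue values authorizes this CA and account.

    issue_values: the values of all CAA issue records found for the name.
    An empty list means there is no CAA restriction at all.
    """
    if not issue_values:
        return True
    for value in issue_values:
        issuer, params = parse_caa_issue_value(value)
        if issuer != ca_domain:
            continue
        uris = params.get("accounturi")
        if uris is None:
            return True  # record restricts the CA only, not the account
        # Simplification: a repeated accounturi parameter never matches.
        if len(uris) == 1 and uris[0] == account_uri:
            return True
    return False


record = ("letsencrypt.org;accounturi="
          "https://acme-v02.api.letsencrypt.org/acme/acct/1234567890")
own = "https://acme-v02.api.letsencrypt.org/acme/acct/1234567890"
other = "https://acme-v02.api.letsencrypt.org/acme/acct/42"  # hypothetical account
print(issuance_permitted([record], "letsencrypt.org", own))
print(issuance_permitted([record], "letsencrypt.org", other))
```

With the account URI in place, an attacker who controls the network path but uses a different ACME account no longer satisfies the record, even though the CA is the same.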

Let’s look at the CAA record from jabber.ru (and xmpp.ru). The rdata_257 part refers to the CAA DNS record type. They are using the accounturi property and even included the validation method they use for the ACME protocol.

jabber.ru rdata_257 = 0 issue "letsencrypt.org;validationmethods=dns-01;accounturi=https://acme-v02.api.letsencrypt.org/acme/acct/19431034"

jabber.ru rdata_257 = 0 issuewild "letsencrypt.org;validationmethods=dns-01;accounturi=https://acme-v02.api.letsencrypt.org/acme/acct/19431034"

They did not enable the critical flag. Not critical means here that if a CA does not understand the CAA properties issue or issuewild, the CA is still allowed to issue a certificate. But these properties are so fundamental to CAA that a CA which does not understand them does not understand CAA at all, and then the flag does not matter. The critical flag is more important for upcoming features.

Coming back to the first question: do CAs need to comply with the rules of the CA/Browser Forum? The attack on jabber.ru showed that real Let’s Encrypt certificates were used. There was no need to force a CA to silently issue server certificates, because these were simply ordered and fetched via an API, and this would work with most server installations out there. If a CA issues real certificates to a technically unauthorized party (it might be a party that is legally authorized somewhere, which we don't want either), this may work in some cases, but not on a very large scale or for an extended period, because the CA risks being removed from browsers as a trust anchor. For example, this happened in 2019 in the Kazakhstan large-scale machine-in-the-middle attack. In 2015, there was the case of the China Internet Network Information Center (CNNIC), which issued an intermediate CA certificate. So CAs have behaved badly in the past. But behaving badly has a price tag, for the CA and for those affected.

Certificate Transparency

One approach to transparency is the Certificate Transparency concept, which was born around ten years ago, when there were problems with CAs issuing certificates after being compromised. The idea is based on a public log of all issued certificates. The concept is specified in RFC 9162. When a client connects to a server via TLS, the client receives a signed certificate timestamp (SCT), which works like a reference under which the certificate was added to a transparency log. The SCT can be part of the server certificate, added by the CA as an X.509v3 extension, or it can be part of a stapled OCSP response, or sent during the TLS handshake. Let’s Encrypt uses an X.509v3 extension, which means the SCT is stored in the certificate itself. Google Chrome and Apple Safari require SCT values to be present in order to trust server certificates. (This seems not to be required for certificates issued by internal CAs.) Dumping the certificate of www.pentagrid.ch with openssl x509, it looks like this:

    Certificate:
        […]
            Issuer: C = US, O = Let's Encrypt, CN = R3
        […]
            Subject: CN = www.pentagrid.ch
        […]
            X509v3 extensions:
                X509v3 Key Usage: critical
                    Digital Signature
                X509v3 Extended Key Usage:
                    TLS Web Server Authentication, TLS Web Client Authentication
                X509v3 Basic Constraints: critical
                    CA:FALSE
                X509v3 Subject Key Identifier:
                    FF:74:9B:23:29:34:B9:C8:90:0C:0B:5B:AE:D2:8D:2A:29:98:B7:9E
                X509v3 Authority Key Identifier:
                    14:2E:B3:17:B7:58:56:CB:AE:50:09:40:E6:1F:AF:9D:8B:14:C2:C6
                Authority Information Access:
                    OCSP - URI:http://r3.o.lencr.org
                    CA Issuers - URI:http://r3.i.lencr.org/
                X509v3 Subject Alternative Name:
                    DNS:pentagrid.ch, DNS:www.pentagrid.ch
                X509v3 Certificate Policies:
                    Policy: 2.23.140.1.2.1
                CT Precertificate SCTs:
                    Signed Certificate Timestamp:
                        Version   : v1 (0x0)
                        Log ID    : 3B:53:77:75:3E:2D:B9:80:4E:8B:30:5B:06:FE:40:3B:
                                    67:D8:4F:C3:F4:C7:BD:00:0D:2D:72:6F:E1:FA:D4:17
                        Timestamp : Nov 24 01:06:04.371 2023 GMT
                        Extensions: none
                        Signature : […]
                    Signed Certificate Timestamp:
                        Version   : v1 (0x0)
                        Log ID    : 48:B0:E3:6B:DA:A6:47:34:0F:E5:6A:02:FA:9D:30:EB:
                                    1C:52:01:CB:56:DD:2C:81:D9:BB:BF:AB:39:D8:84:73
                        Timestamp : Nov 24 01:06:04.372 2023 GMT
                        Extensions: none
                        Signature : […]
        […]

The output above shows that the pre-certificate (some kind of artifact during the certificate generation) was logged to two different logs. Looking up the certificate via crt.sh shows which logs were used. The first log entry refers to a log named "Let's Encrypt Oak 2024h1" and the second entry to a log named "DigiCert Yeti 2024".
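The Log ID in the dump is the SHA-256 hash of the log's public key, printed by openssl as colon-separated hex. Public log lists usually give the same ID in Base64, so a small conversion helps to match an SCT to a named log by hand. The following is a minimal sketch; the function name is our own.

```python
import base64


def log_id_to_base64(openssl_log_id: str) -> str:
    """Convert an openssl-style Log ID (hex with colons, possibly wrapped
    over several lines) into the Base64 form used in CT log lists."""
    hex_digits = "".join(openssl_log_id.replace(":", "").split())
    raw = bytes.fromhex(hex_digits)
    if len(raw) != 32:
        raise ValueError("a CT log ID is a 32-byte SHA-256 hash")
    return base64.b64encode(raw).decode("ascii")


# First Log ID from the certificate dump above.
first_log_id = """3B:53:77:75:3E:2D:B9:80:4E:8B:30:5B:06:FE:40:3B:
                  67:D8:4F:C3:F4:C7:BD:00:0D:2D:72:6F:E1:FA:D4:17"""
print(log_id_to_base64(first_log_id))
```

The resulting string can then be searched in a log list to find the log's name and URL.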

By the way, CA/Browser Forum Baseline Requirements do not require a CA to log to a CT log.

Crt.sh and similar platforms and the certspotter tool

Certificate transparency logs are a huge pile of data. They can be queried via HTTP requests that are specified in RFC 9162. Everyone can operate their own monitor to consume the data and look for certificates of interest while checking the consistency of logs via cryptographic means. One such monitoring tool is the open source tool certspotter. Since monitoring these logs is resource-intensive, there are additional platforms which monitor several transparency logs at once and provide a convenient API to query them. Known platforms are crt.sh by Sectigo and Cert Spotter by sslmate. The latter is based on the certspotter tool mentioned above, but there are dozens of other services, many of them commercial. Certificate transparency logs and services that provide convenient API access must be seen as trusted parties if one relies on them for monitoring or checking certificates. These processing services are not necessarily up to date. When we experimented with the logs, a certificate that had been issued weeks earlier could not be found at crt.sh. The portal crt.sh shows the status of the logs it consumes, so it is at least transparent.
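As an illustration of such an API lookup, the following sketch queries crt.sh for a hostname and condenses the result. It assumes crt.sh's JSON output mode (the output=json query parameter) and the field names serial_number, issuer_name, name_value, not_before and not_after as they appear in that output at the time of writing; treat these as assumptions that may change. The function names are our own.

```python
import json
import urllib.parse
import urllib.request


def crtsh_url(domain: str) -> str:
    """Build a crt.sh query URL that asks for JSON output."""
    return "https://crt.sh/?" + urllib.parse.urlencode({"q": domain, "output": "json"})


def fetch_crtsh(domain: str, timeout: float = 30.0) -> list:
    """Fetch all crt.sh rows for a domain (performs a network request)."""
    request = urllib.request.Request(
        crtsh_url(domain), headers={"User-Agent": "ct-lookup-sketch"})
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.load(response)


def summarise(entries: list) -> dict:
    """Collapse rows to one summary per serial number.

    A pre-certificate and its final certificate share a serial number,
    so both rows end up in the same summary.
    """
    by_serial = {}
    for entry in entries:
        serial = entry.get("serial_number")
        if serial is None or serial in by_serial:
            continue
        names = (entry.get("name_value") or "").split("\n")
        by_serial[serial] = {
            "issuer": entry.get("issuer_name"),
            "names": sorted(set(names)),
            "not_before": entry.get("not_before"),
            "not_after": entry.get("not_after"),
        }
    return by_serial
```

A call such as summarise(fetch_crtsh("pentagrid.ch")) would then return one entry per issued certificate, which can be compared against what the own ACME client has requested.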

Certspotter is a Go program which consumes a list of transparency logs. Once started, it looks for certificates issued to observed hosts/domains. It initially consumes much CPU and quite some network bandwidth for a few days, while it does not store much information on the disk. The found certificates are stored in a directory and it is possible to invoke a script for each match. The hostnames of interest are listed in a watch list file. There is a package for the tool in Debian and derived Linux systems for a simplified installation. One can also craft an own list of certificate transparency logs to not rely on another party here, but since logs are split into consumable pieces per quarter of a year, it would mean a maintenance effort to keep the meta-data up to date.

If one uses these (commercial) platforms to process certificate transparency logs, would that help in a case like the jabber.ru incident? Would they have raised a warning? Likely not, because there was nothing wrong with the Let's Encrypt certificate. These tools do not implement heuristics that help detect odd certificates, such as looking at the issuance timestamps and remaining validity to judge whether a certificate is plausible for the weekly cron job or rather fits agency working hours.
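Such a heuristic could look like the following sketch. It is purely illustrative and not part of any of the tools mentioned: it assumes that the own ACME client renews on a fixed weekday within a known time window (in UTC) and flags issuance times, for example taken from the SCT timestamp, that fall outside of it. The function name and the schedule are hypothetical.

```python
from datetime import datetime, time, timezone


def fits_renewal_schedule(issued_at: datetime, weekday: int,
                          window_start: time, window_end: time) -> bool:
    """Return True if issued_at falls on the expected weekday (Monday = 0)
    and inside the expected time window, both evaluated in UTC."""
    utc = issued_at.astimezone(timezone.utc)
    return utc.weekday() == weekday and window_start <= utc.time() <= window_end


# Hypothetical schedule: cron job runs on Fridays between 01:00 and 01:30 UTC.
expected = dict(weekday=4, window_start=time(1, 0), window_end=time(1, 30))

sct_time = datetime(2023, 11, 24, 1, 6, 4, tzinfo=timezone.utc)  # from the dump above
odd_time = datetime(2023, 11, 22, 14, 0, 0, tzinfo=timezone.utc)  # made-up example

print(fits_renewal_schedule(sct_time, **expected))
print(fits_renewal_schedule(odd_time, **expected))
```

A certificate that fails this check is not necessarily malicious, for example after a manual renewal, but it is worth a look.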

A note on DNSSEC

DNS is insecure, and since the Snowden publication about QUANTUMINSERT, one must assume the existence of a large-scale infrastructure that is capable not only of injecting traffic into a TCP session, but also of spoofing DNS answers, and that may even be usable to bypass domain verification. Therefore, protecting DNS by cryptographic means such as DNSSEC is recommended.

Interestingly, the use of DNSSEC (where available for domains) when resolving hostnames is not mandatory for CAs according to the CA/Browser Forum baseline requirements. And RFC 8555, which specifies the ACME protocol, only recommends using it:

An ACME-based CA will often need to make DNS queries, e.g., to validate control of DNS names. Because the security of such validations ultimately depends on the authenticity of DNS data, every possible precaution should be taken to secure DNS queries done by the CA. Therefore, it is RECOMMENDED that ACME-based CAs make all DNS queries via DNSSEC-validating stub or recursive resolvers. This provides additional protection to domains that choose to make use of DNSSEC.

However, Let’s Encrypt uses DNSSEC for the verification of DNS records according to an employee's statement in the Let’s Encrypt forum, where the author raised this question.

For the extra-level of security, you may want to keep your DNSSEC signing keys private and manage DNSSEC on your own. This way, you do not need to trust your DNS hosting service (but get other headaches).

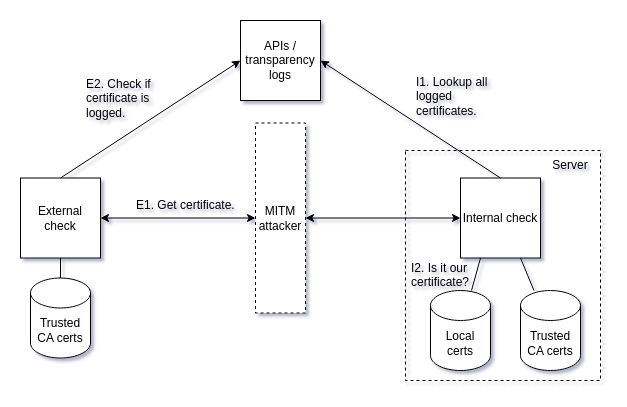

Monitoring idea and implementation

We described a prevention/mitigation approach by using the CAA record in combination with the accounturi property and an iodef record for getting notified, which works as long as a CA follows best practices. It won’t help for rogue CAs. It won’t help for implementation errors anywhere in a distributed system. It won’t help if there is no DNSSEC. And it won’t help if one day so-called qualified certificates according to eIDAS turn evil and don’t follow standard processes either. Therefore, can certificates be monitored to detect "forged" ones? It should be possible by looking from the inside and the outside.

External check: Verify that the server-presented certificate is in a transparency log.

Steps:

Connect to server and get the certificate(s) (E1).

Is the leaf certificate from an expected and trusted CA?

Does the leaf certificate have one or more signed certificate timestamps?

Is it in a transparency log (E2)?

Ideally, this check is run from an unpredictable source IP address (or better, via Tor) to reduce the chance that an attacker who is able to select or omit sources excludes the check from the MITM redirection. The test should ensure that the server presents a certificate that is from an expected and trusted CA. This check does not require using a CAA DNS record. By selecting an expected trust anchor (i.e. a CA certificate or an intermediate CA certificate), the check will reveal a server certificate issued by an untrusted or unexpected CA. The check will not verify that the certificate was issued to the real service admin, as in the jabber.ru case. There could still be a case of domain validation bypass. Checking for presence in the transparency log makes it possible to verify the certificate from the internal side later on.
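The first two steps of the external check can be sketched with Python's standard library alone. This is a minimal illustration and not our published tool: it assumes a service that speaks TLS directly on the given port (no STARTTLS), uses the system trust store instead of a pinned trust anchor, and leaves out the SCT and transparency log steps, which need an X.509 parser. The function names are our own.

```python
import hashlib
import socket
import ssl


def fetch_leaf_certificate(host: str, port: int = 443, timeout: float = 10.0):
    """Connect via TLS and return (DER bytes, decoded certificate dict).

    The default context validates the chain and the hostname against
    the system trust store (performs a network connection).
    """
    context = ssl.create_default_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert(binary_form=True), tls.getpeercert()


def sha256_fingerprint(der: bytes) -> str:
    """Hex SHA-256 fingerprint of a DER-encoded certificate."""
    return hashlib.sha256(der).hexdigest()


def issuer_organization(cert_info: dict):
    """Extract the issuer's organizationName from a getpeercert() dict."""
    for rdn in cert_info.get("issuer", ()):
        for key, value in rdn:
            if key == "organizationName":
                return value
    return None


def check_issuer(host: str, expected_org: str, port: int = 443) -> bool:
    """E1 plus the CA check: does the presented leaf come from the expected CA?"""
    der, info = fetch_leaf_certificate(host, port)
    print("leaf fingerprint:", sha256_fingerprint(der))
    return issuer_organization(info) == expected_org
```

The printed fingerprint is what a later step (E2) would compare against the certificates known from a transparency log monitor.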

Internal check: Verify that all certificates in the transparency log are known to belong to the service.

Steps:

Get all certificates from a transparency log matching an own hostname (I1).

Check if certificates are known locally on the service host, i.e. compare them to certificates that a host uses or used before (I2).

The ACME client may have copies of some previously obtained server certificates, which can be used for comparison. At least there is one current certificate on the server. Every certificate on the local filesystem is one that was retrieved by the ACME client. But the ACME client may not store all certificates ever issued. So, there could be a lack of observability for the past, which must then be accepted as it is.
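The comparison in step I2 boils down to a set difference. The following sketch computes SHA-256 fingerprints of all certificates found in PEM text (for example the files an ACME client keeps) and reports logged fingerprints that are not known locally. It is a simplified illustration with our own function names. Note that a pre-certificate in a log has a different fingerprint than the final certificate, so a real implementation has to compare final certificates only or match on issuer and serial number instead.

```python
import base64
import hashlib
import re

PEM_CERT = re.compile(
    r"-----BEGIN CERTIFICATE-----(.+?)-----END CERTIFICATE-----", re.DOTALL)


def fingerprints_from_pem(text: str) -> set:
    """Return the SHA-256 fingerprints of all certificates in a PEM text.

    Other PEM blocks in the same text, such as private keys, are ignored.
    """
    fingerprints = set()
    for body in PEM_CERT.findall(text):
        der = base64.b64decode("".join(body.split()))
        fingerprints.add(hashlib.sha256(der).hexdigest())
    return fingerprints


def unknown_certificates(logged: set, local: set) -> list:
    """Fingerprints seen in a transparency log but not known locally."""
    return sorted(set(logged) - set(local))
```

Every fingerprint returned by unknown_certificates is a certificate that was issued for the hostname but never seen by the host, which is exactly the situation of the jabber.ru incident.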

The checks are more or less straightforward to implement, and we implemented tools for this. The main question is whether one wants to run one's own Certificate Transparency monitoring tool or use existing Certificate Transparency APIs, such as crt.sh, for the lookup. If a monitoring tool is used, then it makes sense to run it centrally and distribute the data at regular intervals. In a simple case, this could be a Cron job which fetches the condensed data from a trusted source.

Both ways – using data from the transparency log monitoring or using an API – are implemented in our tool, but the part of distributing the transparency log monitoring results is left open, because there is likely a different approach for each infrastructure.

The external check tool is a Nagios/Icinga script written in Python. If used as a stand-alone tool, it can also be run somewhere on a Raspberry Pi behind a dial-up line. The script can compare server certificates against a directory of transparency log certificates, against a directory of certificates known to be trusted, against crt.sh, and against the free sslmate.com API.

The internal tool is a script that can be run via Cron as well. Even if it is called internal script, it does not need to run on the TLS-enabled server, load-balancer, or reverse proxy, whatever stores the certificate. It is also possible to collect certificates from your TLS-server at a central place and run the internal script centrally. Using this method, there is no need to install tools on a server. It is only important to transfer certificates in a secure manner.

Additional monitoring ideas

A few monitoring ideas are mentioned in Valdikss’ article on the jabber.ru analysis or can be derived from it. Another article by Hugo Landau, who wrote the earlier mentioned RFC 8657, also has some recommendations. Based on these and our own ideas, we implemented two more monitoring scripts.

One script implements a TCP-based traceroute and uses a set of destination ports for the test connection. If the last hops differ from a set of known last hops, then something is happening, not necessarily malicious activity, but something that should be checked. Will the script detect any MITM attack? No. It is astonishing that the attack on the affected jabber.ru servers was even recognizable in the traceroute depending on the destination port. A MITM attack doesn't have to be like this. There is no need for another hop to appear in the traceroute.

The other test script implements TLS server fingerprinting. Salesforce developed a method to fingerprint SSL/TLS clients in 2017, named JA3. The same idea applied to SSL/TLS servers is named JA3S. Salesforce’s implementation is for analysing captured network traffic. Later, Salesforce adapted the idea for active fingerprinting of TLS servers and released a tool called JARM. The idea of JARM is to send a series of TLS Client Hello messages with varying TLS extensions and ciphers to a TLS endpoint and to analyse the returned TLS Server Hello messages. JARM extracts the characteristics and creates a hash, which represents a specific TLS implementation and configuration. If a MITM attack does not anticipate this monitoring possibility, there is a chance to detect such an attack. Our derived tool uses the JARM implementation and checks a server against an expected JARM hash.

Our scripts and further documentation are published on GitHub. The check-transparency-logs scripts are here and the check-mitm scripts are here.

Recommended actions

The important countermeasures are summarized below. Whether you implement monitoring and how far you want to go with it depends on the expected threats. The implementation of the following points is a morning's work (except operating DNSSEC on your own) and should achieve a considerable improvement in the security level.

Enable DNSSEC for your domain(s).

Depending on how you handle certificates in general, you may want to set a more generic CAA issue record for your domain(s) and pin the CA(s) you use.

For specific hosts, set (a) CAA issue/issuewild record(s) that includes the accounturi.

If you are worried about MITM attacks, document your SSH server fingerprints as early as possible and consider using SSHFP DNS records.

Set a CAA iodef property for your domain(s) to express a notification wish, but don't expect any reports.

You may want to use our scripts to monitor certificates in addition, but preventive actions are more important.
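As a starting point for the CAA-related items, the following sketch prints zone file lines for the issue, issuewild and iodef properties of one name. The helper name, the account number and the mailto address are placeholders, and the exact zone file syntax depends on your DNS server or hosting provider, so treat the output as a template to adapt.

```python
def caa_zone_lines(name: str, ca: str, account_uri: str = None,
                   iodef: str = None, critical: bool = False) -> list:
    """Build CAA zone file lines for one name.

    name must be fully qualified (with trailing dot). With critical=True
    the issuer critical flag (128) is set on the issue/issuewild records.
    """
    flags = 128 if critical else 0
    value = ca if account_uri is None else f"{ca};accounturi={account_uri}"
    lines = [
        f'{name} IN CAA {flags} issue "{value}"',
        f'{name} IN CAA {flags} issuewild "{value}"',
    ]
    if iodef is not None:
        lines.append(f'{name} IN CAA 0 iodef "{iodef}"')
    return lines


# Placeholder account URI and contact address for illustration.
for line in caa_zone_lines(
        "pentagrid.ch.", "letsencrypt.org",
        account_uri="https://acme-v02.api.letsencrypt.org/acme/acct/1234567890",
        iodef="mailto:security@example.org",
        critical=True):
    print(line)
```

If no wildcard certificates are used, the issuewild line can instead be set to an empty issuer (";") to forbid wildcard issuance altogether.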

Be aware that an attacker will detect these measures and will adjust attacks accordingly. Other forms of attack and measures must therefore also be taken into consideration.